"AI-Powered" is Not a Solution: Avoiding Buzzwords in Pitch Decks

"AI-Powered" is a Series A red flag. Stop using buzzwords that signal laziness. Master the 3-Clause Mechanism Framework to prove your technical moat now.

2.3 HOW TO FRAME THE SOLUTION SLIDE (WITHOUT OVERCLAIMING)

2/18/2026 · 6 min read


Most founders think adding "AI-powered" to their Solution Slide makes their pitch stronger. In 2026, it does the opposite. The phrase has been so systematically overused across every vertical, from HR tech to supply chain to legal, that it now functions as an automatic credibility discount in the minds of most Series A partners. It does not describe a solution. It describes an implementation method that 4,000 other companies are also claiming on their own Solution Slides this quarter. Calling your product "AI-powered" without specifying what the AI does, to what outcome, and why that outcome is difficult to replicate is the pitch deck equivalent of listing "uses electricity" as a competitive advantage. If your Problem Slide is not yet structured to support a claim beyond the buzzword layer, the diagnostic work begins with what makes a real Problem Slide and how investors actually judge it.

Why "AI-Powered" Has Become a Series A Red Flag, Not a Signal of Sophistication

There is a precise moment when a category descriptor stops being useful and starts being a liability. For "AI-powered," that moment passed somewhere in late 2023. By the time the 2024–2026 vintage funds began deploying capital in earnest, the phrase had accumulated enough noise across enough failed decks that senior partners at top-tier US and UK funds began explicitly flagging it as a diligence concern — not because AI is unimportant, but because the phrase now signals that the founder has not done the harder work of articulating what specifically the AI enables.

The pattern is consistent and the failure mode is precise: a founder building a genuinely differentiated product reaches the Solution Slide and defaults to "AI-powered" as a shorthand for a technical architecture they assume the VC will find impressive. The VC does not find it impressive. The VC finds it indistinguishable from the other eleven decks they reviewed that week that also claimed to be AI-powered. The phrase has become categorical wallpaper — it covers the wall but communicates nothing about the structure behind it.

I have reviewed sixteen decks this year where "AI-powered," "machine learning-driven," or "LLM-native" appeared in the Solution Slide headline without a single follow-on clause explaining what the AI component specifically does that a non-AI alternative could not. Thirteen of those founders did not advance past the first call. The psychological origin is a form of borrowed credibility: the founder knows AI is a fundable space, so they lead with the category rather than the mechanism. The VC sees through this immediately because they are not evaluating the category — they are evaluating whether this specific team has a specific technical insight that produces a specific outcome that the market cannot easily replicate. "AI-powered" answers none of those questions.

The Buzzword Discount: Calculating the Valuation Cost of Vague Technical Claims

Vague technical language does not just fail to impress — it imposes a quantifiable cost on how VCs model your defensibility.

As of early 2026, top-tier US and UK funds stress-testing Series A investments are applying what internal memos increasingly call a moat confidence score to early-stage decks — an informal assessment of how defensible the core technical mechanism is against well-resourced competitors. Buzzword-led Solution Slides score poorly on this assessment by default, because the analyst cannot extract a defensibility argument from a phrase that applies to the entire market.

Here is what the discount looks like in practice:

"AI-powered reconciliation platform"
Moat confidence score: Low (the mechanism is unspecified)
Analyst action: Flag for clarification
Reaches partner stage: Rarely

"Reconciliation engine using supervised classification trained on 4.2M proprietary cases"
Moat confidence score: High (both the mechanism and the data moat are visible)
Analyst action: Advance to partner
Reaches partner stage: Consistently

"LLM-native workflow automation"
Moat confidence score: Low (a category description only)
Analyst action: Pass or request a resubmission
Reaches partner stage: Rarely

"Prompt-routing layer that reduces LLM API cost by 62% for high-volume enterprise queries"
Moat confidence score: High (a specific outcome and a specific mechanism)
Analyst action: Advance to partner
Reaches partner stage: Consistently

The pattern is clear: the moment a VC can see what the AI does and why that specific thing is hard to replicate, the conversation shifts from "is this real?" to "how big can this get?", which is the conversation that produces term sheets. The median US Series A pre-money valuation currently falls in the $22M–$28M range. At that level, a partner needs to defend the moat argument to their investment committee. "AI-powered" is not a moat argument. It is a category membership claim that every competitor in the space can make on the same afternoon.

The cost of the buzzword is not just a softer reception in the room. It is a compressed pre-money and an extended diligence cycle, because the partner now needs additional meetings to extract the defensibility argument you failed to put on the slide.

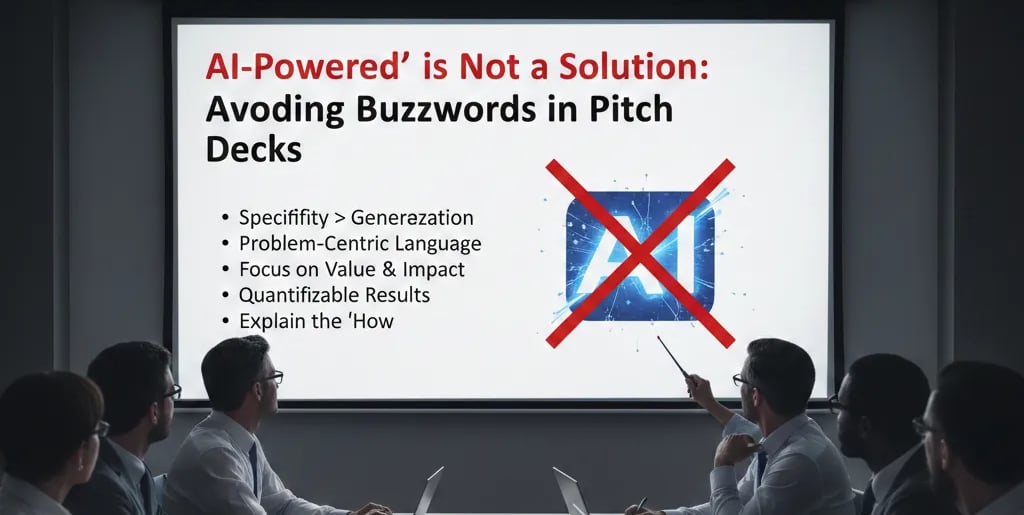

The Mechanism Specificity Protocol: Replacing Buzzwords With Claims a VC Can Actually Model

The fix is not to remove the AI reference. AI may be genuinely central to your product's defensibility. The fix is to replace the categorical claim with a mechanism-specific statement that passes what experienced analysts call the Substitution Test: could a non-AI version of this product produce a comparable outcome? If yes, the AI is an implementation detail, not a moat. If no, explain precisely why — and that explanation is your Solution Slide.

Use the Three-Clause Mechanism Framework to rebuild any buzzword-dependent solution statement:

Clause 1: What the AI specifically does (one technical action, not a category label)
Not "uses machine learning" but "classifies invoice exceptions using a supervised model."

Clause 2: What that specific action produces (a measurable output, not a benefit)
Not "improves accuracy" but "reduces misclassification rate from 14% to 0.3%."

Clause 3: Why that output is structurally difficult to replicate (the moat signal)
Not "proprietary AI" but "trained on 4.2M labelled exceptions accumulated across 60 enterprise deployments over six years."

The three clauses can be compressed into a single sentence. They do not require separate bullet points. The goal is not length — it is specificity at every layer.

Weak Version: "Our AI-powered platform automates financial operations for enterprise teams, delivering real-time insights and reducing manual workload through intelligent process automation."

What the AI does: ✗ absent — "intelligent process automation" is a category claim

What it produces: ✗ absent — "reducing manual workload" is not a metric

Why it is hard to replicate: ✗ absent entirely

Analyst note: Indistinguishable from 40 competing decks. Buzzword-dependent. Pass.

VC-Ready Version: "Our supervised classification model identifies invoice mismatches that rule-based systems miss — cutting exception rates from 14% to 0.3% — trained on 4.2M proprietary edge cases that took six years of enterprise deployment to accumulate."

What the AI does: ✓ supervised classification of invoice mismatches

What it produces: ✓ 14% → 0.3% exception rate

Why it is hard to replicate: ✓ 4.2M proprietary edge cases, six years of enterprise deployment

Analyst note: Specific mechanism. Quantified outcome. Data moat with time-to-replicate signal. Advance.

One additional rule on adjacent buzzwords: "Machine learning-driven," "LLM-native," "GenAI-enabled," and "intelligent automation" all fail the same test as "AI-powered" if they are used as standalone descriptors without a mechanism clause attached. The label is irrelevant. The mechanism is everything.
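If you want a mechanical first pass before the human read, the rule above can be roughed out in a few lines of Python. This is a minimal sketch, not a tool any fund uses: the buzzword list is hypothetical and drawn only from the labels named in this section, and it assumes that a statement containing no figure at all has no quantified output (Clause 2). It cannot tell whether a mechanism clause is real, so a clean result is not a pass; only a flagged result is informative.

```python
import re

# Hypothetical list: only the labels named in this article, lowercased.
BUZZWORDS = [
    "ai-powered",
    "machine learning-driven",
    "llm-native",
    "genai-enabled",
    "intelligent automation",
    "proprietary algorithm",
]


def flag_buzzwords(statement: str) -> list[str]:
    """Return the buzzwords in a statement that carries no figure at all.

    Crude proxy (an assumption, not a rule from any fund): if the
    statement contains no digit, it is treated as lacking a quantified
    output, so any buzzword in it counts as a standalone descriptor.
    This does not check Clause 1 (mechanism) or Clause 3 (moat).
    """
    text = statement.lower()
    found = [word for word in BUZZWORDS if word in text]
    has_figure = re.search(r"\d", statement) is not None
    return [] if has_figure else found


if __name__ == "__main__":
    weak = ("Our AI-powered platform automates financial operations for "
            "enterprise teams, delivering real-time insights and reducing "
            "manual workload through intelligent process automation.")
    print(flag_buzzwords(weak))
```

Run against the Weak Version above, this flags "ai-powered"; the prompt-routing example from the discount table returns nothing because it carries a figure. Treat the output as a prompt to apply the Substitution Test, not as a substitute for it.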

Apply the Substitution Test to every technical claim on your Solution Slide before the deck goes out: If I replaced the AI with a well-trained human team or a rules-based system, would the outcome change significantly? If the honest answer is no, your AI is a cost-efficiency play, not a moat — and your solution statement should be written accordingly, because a VC who figures that out during diligence will feel misled by a slide that implied otherwise.

Three Structural Mistakes Founders Make When Cleaning Buzzwords From Their Solution Slide

1. Replacing one buzzword with another. Swapping "AI-powered" for "proprietary algorithm" without specifying what the algorithm does is a lateral move. "Proprietary" is not a mechanism. It is a legal classification. The analyst will note the absence and move on.

2. Over-specifying the technical architecture at the expense of the business outcome. Founders who correctly identify that they need to show mechanism specificity sometimes overcorrect by turning the Solution Slide into a technical whitepaper. If a non-technical partner cannot extract the customer outcome from your mechanism description in one read, the slide has failed in the opposite direction. Clause 2 — the measurable output — must remain visible and prominent.

3. Assuming the demo call will fix the buzzword problem. Most founders who know their Solution Slide is buzzword-heavy plan to clarify verbally in the pitch. At Series A in 2026, the deck is pre-screened by an analyst before it reaches a partner. The analyst does not get a verbal clarification. If the slide fails the analyst's read, it does not reach the meeting where you planned to explain it.

What Mechanism Specificity Does to Your Position at the Term Sheet Table

A Solution Slide that names the mechanism, quantifies the output, and signals the data moat does something specific to the partner's internal calculus: it converts a diligence question into a diligence confirmation. The partner is no longer asking "what does the AI actually do?" but "how replicable is this data asset?", which is a later-stage question that presupposes conviction. That shift in question type is the difference between a founder who is being evaluated and a founder who is being valued. For the full system connecting buzzword-free solution framing to the Problem Slide logic that makes it credible, see the complete Problem and Solution Slides framework for Series A founders.

The 16 VC-Quality AI Prompts inside the $5K Consultant Replacement Kit include a dedicated prompt sequence that runs the Three-Clause Mechanism Framework and the Substitution Test against your current Solution Slide language — returning a rewritten statement with mechanism specificity, quantified outcome, and moat signal embedded. The full Kit is $497. Use the prompt set built to remove every buzzword a Series A analyst will flag.

"AI-powered" describes a feature. A mechanism, an outcome, and a moat describe a business. VCs fund the latter.

Funding Blueprint

© 2026 Funding Blueprint. All Rights Reserved.